Minnesota’s Youth Need a Better Path Forward on Chatbot Regulation

By Shane Galvin, a Minnesota-based consultant who helps businesses and nonprofits implement AI tools responsibly and effectively.

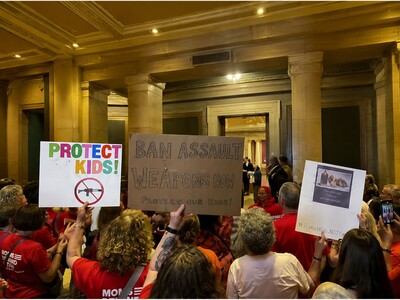

Minnesota risks taking a step backward on artificial intelligence policy — one that could leave students, small businesses, and nonprofits at a disadvantage. If lawmakers move forward with overly broad chatbot proposals, from strict compliance requirements to outright bans for minors, Minnesotans could lose access to tools they increasingly use every day.

As someone who works with companies and nonprofits on using AI effectively, I see the value of these tools firsthand. AI is helping small businesses operate more efficiently, nonprofits stretch limited budgets, and students explore new ideas and ways of learning. Much of that work happens through chat-based tools that help people answer questions, solve problems, and automate routine tasks like customer service or donor outreach.

That is why some of the chatbot proposals under consideration in Minnesota are raising concerns among the state’s growing community of AI developers, educators, and organizations using these tools responsibly.

While it is reasonable for lawmakers to establish safeguards for young people interacting with AI, some proposals go too far. The strictest versions would prohibit anyone under 18 from accessing broadly defined “recreational chatbots.” Others stop short of a ban but would still impose complex compliance obligations that could make it harder for developers to offer chatbots and for organizations to use them confidently.

That uncertainty creates real challenges, especially for smaller organizations without large legal or compliance departments. Even chatbots designed for straightforward purposes, such as answering customer questions or helping nonprofits communicate with volunteers, could fall under broad new rules. Faced with unclear standards and steep penalties for violations, some organizations may decide the risk is not worth it and stop using AI tools altogether.

Students could face the greatest long-term impact.

Recent national surveys show that most teenagers already use AI chatbots for homework help, research, and everyday questions. These tools are quickly becoming part of how students learn and prepare for college and future careers. Restricting access entirely is unlikely to stop young people from using AI, but it could leave some Minnesota students behind peers in neighboring states that are taking a more balanced approach.

Minnesota does not need to choose between protecting kids and preserving access to new technology.

Neighboring states are already showing there is another path forward. In Iowa, lawmakers recently advanced legislation that would require chatbots to disclose they are not human, establish safeguards around self-harm and inappropriate content, and give parents more oversight — all without banning access outright or creating unnecessary barriers for users and organizations.

That is the kind of balanced approach Minnesota should consider. Clear guardrails and reasonable protections make sense. Blanket bans and overly burdensome regulations do not. Minnesota should focus on helping young people learn how to use AI responsibly and safely, rather than forcing them to sit on the sidelines while these technologies become standard tools in education and the workplace.